Hadoop

Highly reliable, scalable, distributed processing of large data sets using simple programming models.

Apache Hadoop

The emergence of Hadoop has changed the data landscape. With Hadoop, you can gain new or improved business insights from structured, unstructured and semi-structured data sources. Large volumes of data, whether stored historically or currently held in siloed departments, can be gathered and analyzed in one place at an affordable price. Hadoop offers highly reliable, scalable, distributed processing of large data sets using simple programming models.

Industries we serve:

- Manufacturing

- Financial

- Health Care

- Insurance

- Retail

Solutions we deliver:

- Personalized Product Offering

- Fraud Detection and Security

- Compliance and Regulatory Reporting

- Customer Segmentation

- Risk Management

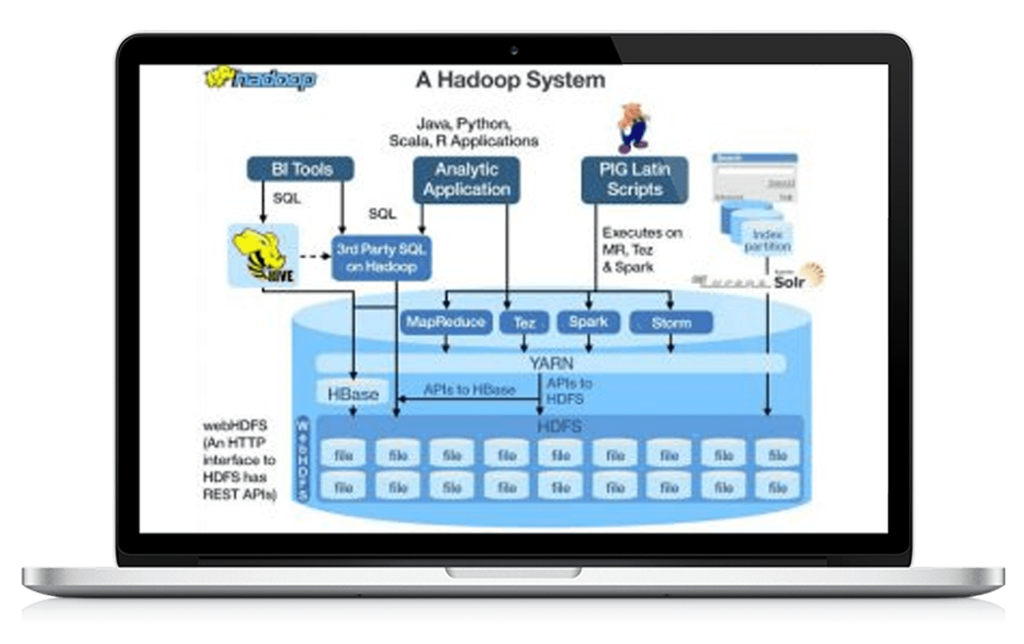

Hadoop Services:

- HDFS

- MapReduce

- Hadoop Streaming

- Hive and Hue

- Pig

- Sqoop

- Oozie

- HBase

- FlumeNG

- ZooKeeper

- Whirr

- Mahout

- Fuse

Apache Hadoop - FAQs

Apache Hadoop is an open-source framework that is used to efficiently store and process large datasets ranging in size from gigabytes to petabytes of data. Instead of using one large computer to store and process the data, Hadoop allows clustering multiple computers to analyze massive datasets in parallel more quickly.
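To make the idea of splitting work across machines more concrete, here is a minimal sketch of the map, shuffle and reduce steps behind a word count, written in plain Python. It runs on a single machine and does not use any Hadoop API; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample text are illustrative assumptions. In a real cluster, each chunk would typically be processed on a different node.

```python
from collections import defaultdict


def map_phase(chunk):
    """Emit a (word, 1) pair for every word in one chunk of text."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(pairs):
    """Group all emitted values by key, as the framework would between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all values for one key into a single total."""
    return key, sum(values)


def word_count(chunks):
    """Run map, shuffle and reduce in sequence over a list of text chunks."""
    mapped = []
    for chunk in chunks:  # on a cluster, each chunk could be mapped in parallel
        mapped.extend(map_phase(chunk))
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    # Hypothetical input: two chunks standing in for two blocks of a large file.
    sample_chunks = ["big data big insights", "data in one place"]
    print(word_count(sample_chunks))
```

Because each chunk is mapped independently and each key is reduced independently, both steps can be spread over many machines, which is what lets this simple model scale to very large datasets.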

AOL uses Hadoop for statistics generation, ETL-style processing and behavioral analysis. eBay uses Hadoop for search engine optimization and research. InMobi uses Hadoop on 700 nodes with 16,800 cores for various analytics, data science and machine learning applications.

Features of Hadoop That Make It Popular

- Open Source: Hadoop is open source, which means it is free to use.

- Highly Scalable Cluster: Hadoop is a highly scalable model.

- High Availability

- Cost-Effective

- Flexibility

- Easy to Use