How Gen AI Reduces IBM Sterling Integration Failures


This blog article describes the different use cases and setup for the Sterling Red Hat OpenShift (RHOS) Certified containers. This series provides an overview of the different technology aspects of these containers, and of how you can take advantage of the new capabilities the RHOS platform provides for Sterling B2B Integrator (B2Bi) and Sterling File Gateway (SFG).

This is the second of two posts that focus on autoscaling, one of the major advantages of running Sterling solutions in a Kubernetes environment. The previous post focused on how to set up OpenShift to auto-scale B2Bi/SFG. This post covers how to configure B2Bi/SFG to enable auto-scaling, describes different methods to test the auto-scale functionality with Kubernetes, and summarizes the business value of using this functionality.

Let’s start this blog post by configuring B2Bi to enable auto-scaling, which is very easy to do.

OpenShift’s Horizontal Pod Autoscaler (HPA) allows us to auto-scale the B2Bi containers. In addition, Sterling B2B Integrator version 6.1.0.0 adds autoscaling support for the server adapters, including the FTP, FTPS, SFTP and C:D adapters. To pre-configure autoscaling on the communication protocol server adapters you need to do the following:

The main driver of this autoscaling exercise is the ability to send a stimulus to the B2Bi RHOS cluster, in the form of increased CPU usage, and observe a conditioned response: pods scaling up or down.

Sending this controlled stimulus can be achieved in different ways. This document describes four different approaches to demonstrate OpenShift’s auto scalability functionality with B2Bi containers. The autoscaling method that we’re using is based on CPU utilization, as this is easier to simulate. The four approaches we used to simulate high volumes are the following:

Here’s a general description of how to implement each of these methods:

This is a very effective and simple way to increase CPU usage: access the terminal in one of the B2Bi pods and issue the following endless loop command:

yes > /dev/null &

Usually, running this command 3 or 4 times, combined with lowering the threshold in the horizontal autoscaler configuration to 40% CPU utilization, allows the pods to scale up within a couple of minutes. Make sure to configure a reasonable maximum number of pods in your OpenShift HPA.

To allow your system to scale down, you can use the following commands to get the PIDs of the endless loop processes and then stop them. Once the CPU utilization goes down, OpenShift will reduce the number of pods, back to the original number, within a few minutes.

ps -ef | grep yes

kill -9 <# Process ID>

Note: Be very cautious when using this method. It drives CPU utilization to 100% and could affect your whole cluster, so make sure that you terminate these processes or pods when you are done.
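To make the cleanup step harder to forget, the start and stop commands above can be wrapped in two small helper functions that record the PID of each loop. This is only a minimal sketch: the function names and the `PID_FILE` location are made up for illustration, and it assumes the pod image provides a POSIX shell and the `yes` command.

```shell
#!/bin/sh
# Sketch only: start_load, stop_load and PID_FILE are illustrative names,
# not part of B2Bi or OpenShift.
PID_FILE="${PID_FILE:-/tmp/cpu_load.pids}"

# Start N endless "yes" loops in the background and record each PID.
start_load() {
  _count="${1:-3}"
  _i=0
  while [ "$_i" -lt "$_count" ]; do
    yes > /dev/null &
    echo "$!" >> "$PID_FILE"
    _i=$((_i + 1))
  done
}

# Stop every loop recorded in PID_FILE, then remove the file.
stop_load() {
  [ -f "$PID_FILE" ] || return 0
  while read -r _pid; do
    kill "$_pid" 2>/dev/null || true
    # Reap the process if it is a child of this shell; ignore it otherwise.
    wait "$_pid" 2>/dev/null || true
  done < "$PID_FILE"
  rm -f "$PID_FILE"
}
```

After sourcing the file in the pod terminal, `start_load 4` starts four loops and `stop_load` ends them. The default `kill` signal (SIGTERM) is normally enough for `yes`, so `kill -9` is only needed if a process refuses to exit.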

A stress testing tool such as the open-source Apache JMeter can simulate calls for both file transfer and EDI development processes, generating high volumes of data so that you can observe how the system reallocates resources internally. This simulation helps establish benchmarks for autoscaling with data and processes that are similar to what the current system is processing. For this method, ideally, you create an SFTP Server adapter along with a corresponding user (SFG Partner) and mailbox. If you need to replicate more intensive CPU usage, you can configure a business process that is triggered as files are uploaded into the SFTP mailbox (Figure 1). This business process can be a simple passthrough, or it can re-route or transform a file.

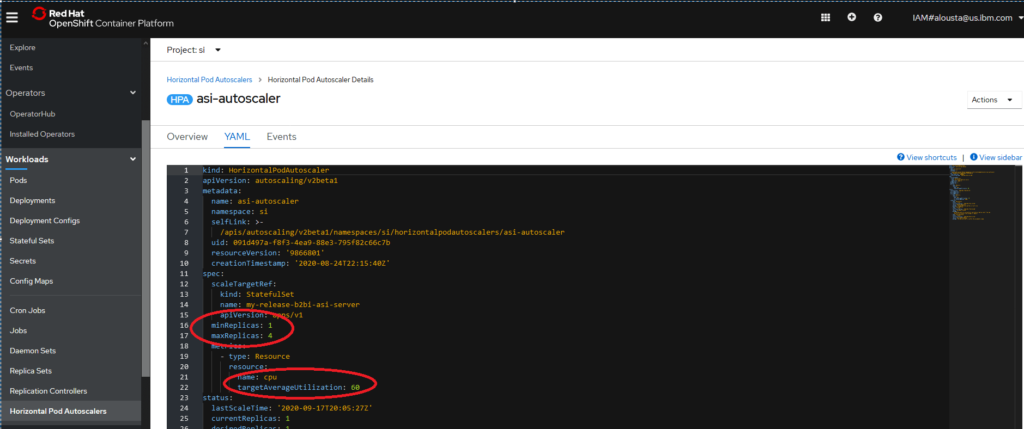

This is the simplest method for a quick view of the autoscaling functionality. You can configure the Horizontal Pod Autoscaler with a threshold that is below the current utilization rate. This triggers OpenShift to immediately scale up the number of pods until the average utilization rate is below the configured CPU utilization threshold (Figure 2).

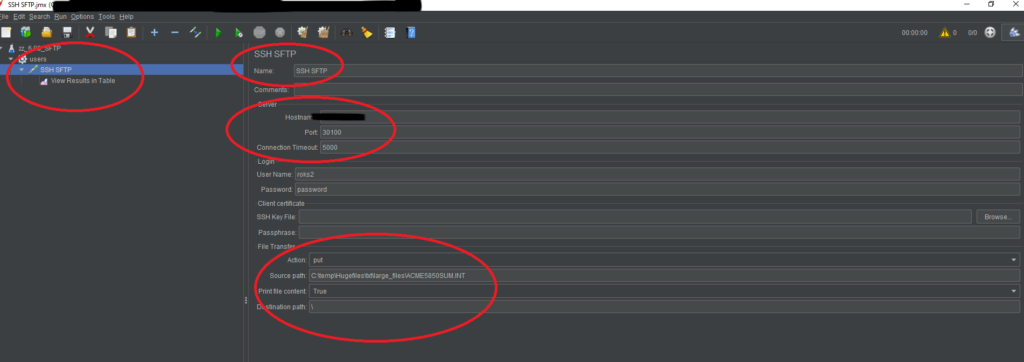
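To estimate how many pods a given threshold will produce, it helps to know the rule the Kubernetes HPA documents: desired replicas = ceil(current replicas × current utilization / target utilization), bounded by the configured minimum and maximum. The sketch below applies that rule with integer arithmetic. The function name is made up for illustration, and it deliberately ignores the autoscaler's tolerance band and stabilization windows, so treat the result as an approximation.

```shell
#!/bin/sh
# Sketch only: desired_replicas is an illustrative helper, not an oc command.
# Usage: desired_replicas CURRENT_REPLICAS CURRENT_CPU_PCT TARGET_CPU_PCT MAX_REPLICAS [MIN_REPLICAS]
desired_replicas() {
  _cur="$1"; _util="$2"; _target="$3"; _max="$4"; _min="${5:-1}"
  # Integer ceiling of (current replicas * current utilization / target).
  _want=$(( (_cur * _util + _target - 1) / _target ))
  # Clamp to the HPA's configured maximum and minimum replica counts.
  if [ "$_want" -gt "$_max" ]; then _want="$_max"; fi
  if [ "$_want" -lt "$_min" ]; then _want="$_min"; fi
  echo "$_want"
}
```

For example, `desired_replicas 1 70 40 4` prints `2`: one pod running at 70% against a 40% target needs two pods to bring the average under the threshold. Keep in mind that HPA utilization is measured relative to the CPU requested by the pod, not the CPU of the node.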

This is another effective method to stress a B2Bi OpenShift cluster. You need to set up an SFTP server adapter, a user, and a mailbox to use this method. Figure 3 shows the setting in a Microsoft Windows 10 Professional environment, but you can use this method on other OS platforms as well.

The master script (launchBig.cmd) sets up a loop based on the number of files written in the folder “Hugefiles”. Within this loop, another script (sendFile) is executed. This script uploads a file into the SI SFTP Server mailbox, which increases CPU usage. As more files are uploaded, the cluster HPA eventually starts another pod.

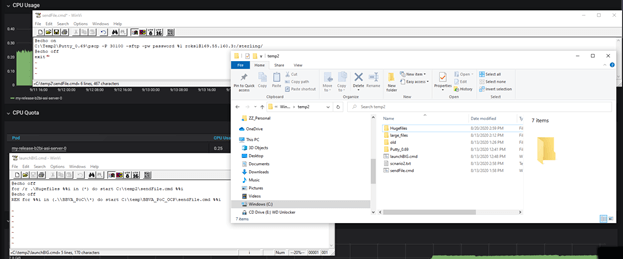
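On Linux or macOS, the same loop-and-upload idea can be approximated with the OpenSSH `sftp` client in batch mode. The following is a rough shell equivalent, not a port of the Windows scripts in Figure 3: the function names, the remote directory and the connection details are all placeholders, and batch mode assumes non-interactive (for example key-based) authentication is set up for the SFTP user.

```shell
#!/bin/sh
# Sketch only: build_batch and send_files are illustrative helpers.

# Write an sftp batch file that uploads every regular file in SOURCE_DIR.
# Usage: build_batch SOURCE_DIR REMOTE_DIR BATCH_FILE
build_batch() {
  _src="$1"; _remote="$2"; _batch="$3"
  printf 'cd "%s"\n' "$_remote" > "$_batch"
  for _f in "$_src"/*; do
    [ -f "$_f" ] || continue   # skip subdirectories and an empty glob
    printf 'put "%s"\n' "$_f" >> "$_batch"
  done
}

# Run the batch against the SFTP server adapter.
# Usage: send_files USER HOST PORT BATCH_FILE
send_files() {
  sftp -b "$4" -P "$3" "$1@$2"
}
```

A run might look like `build_batch ./Hugefiles /Inbox /tmp/upload.batch` followed by `send_files partner1 sftp.example.com 2222 /tmp/upload.batch`, where the user, host, port and mailbox path are hypothetical values to replace with your own. Repeating the second call in a loop keeps the load sustained long enough for the HPA to react.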

Once you execute any of the stress methods listed above, you can see the autoscaling results on the Grafana or the native RHOS dashboards. Figure 4 shows the average CPU usage of the “my-release-b2bi-asi-server-0” pod. The green area on the graph shows the CPU usage with the initial pod running. As the stress test progresses, a second pod’s CPU utilization is represented in yellow, as the workload is now shared by the two pods. After the stress test is finished, there is a grace period during which the second pod is still up and running; eventually, the HPA returns to the initial state (equal to the configured minimum pod replicas).

In conclusion, Sterling B2Bi/SFG OpenShift certified containers open the door to a whole new set of possibilities, by providing ways to improve and expand their current functionality to behave like a cloud native solution. They enable the adoption of a hybrid cloud model supported by the OpenShift platform.

In the last two blog posts we covered the autoscaling functionality, which is the most obvious advantage of running in a Kubernetes environment that is smart enough to allocate resources dynamically in the most efficient way, without the limitations of manual processes and static configuration setups.

Autoscaling provides not only a better allocation of the underlying infrastructure resources, but also reduces any slowdowns in the system due to unexpected peak loads, and it allocates the resources where they’re most needed.

As a result of containerizing the solution, the individual pods are more targeted to specific types of loads and processes which makes them more efficient to run and manage. Containerization also reduces the size of the software components and reduces the time it takes for their loading, readiness and availability.

The B2Bi/SFG OpenShift containers need to be more flexible to allow autoscaling, so static remote perimeter servers can now be replaced with more dynamic OpenShift services. This makes the system more efficient, and it no longer requires manual intervention to expand the available resources to meet high throughput demand.

So, in short, by demonstrating autoscaling you can demonstrate the following business values to your company:
